Former Chief Investigator and Associate Director Dist Prof Peter Corke featured in a Radio National Breakfast segment presented by Sally Sara on 25th November.

Changing Australia: Peter Corke and advancing robotics in Australia – ABC listen

Congratulations to Chief Investigator Professor Will Browne, whose project ASTRO has taken out the top spot as the 2025 Champion of QUT’s Excolo! pitching competition Grand Final, securing up to $100,000 in investment funding via the Industry Engagement Fund. ASTRO is an Upper Arm Active Stroke Rehabilitation Orthotic that aims to enhance post-stroke recovery while easing demand on healthcare systems.

QUT Excolo! is a pitching competition delivered by QUT’s Office of Industry Engagement: research teams are matched with a commercialisation coach, and participate in pitching workshops in collaboration with QUT Entrepreneurship.

Read more: QUT – News

UTS PhD researcher Munia Ahamed presented her paper at the 52nd International Conference on Computers and Industrial Engineering (CIE52) on 29th October.

Her paper, entitled "A Computational Approach to Quality Dimension Implementation in Industry 4.0: Integrating DMAIC with Statistical Analysis for Enhanced Defect Management", was co-authored by Nathalie Sick, Matthias Guertler, Mickey Clemon and Mariadas Capsran Roshan.

The paper focused on developing a computational approach for implementing Quality Dimensions in Industry 4.0, integrating DMAIC with statistical modelling to strengthen defect management decision-making.

We’re excited to celebrate three ARC Discovery Project successes! These groundbreaking projects will advance robotics in deformable and unstructured environments and co-design assistive technologies that promote independence and dignity.

Congratulations to all our researchers for securing over $2.1M in funding!

Read the full project list: Discovery Projects 2026 | Australian Research Council

Written by Katia Bourahmoune, UTS & Acting Co-Lead Quality Assurance and Compliance program

Heidegger describes equipment as ready-to-hand when it disappears into practice, when its use is so seamlessly integrated that it ceases to be an object of thought and becomes instead a transparent extension of action. A hammer is not noticed as a hammer when it drives a nail effectively; it is only when it splinters or slips that it becomes an object of scrutiny, unready-to-hand, with its use questioned. In modern manufacturing, collaborative robots (cobots) occupy an uneasy position between these two states. They promise repeatability, precision, and tireless monitoring, yet they are undeniably still machines to be supervised, audited, and monitored. In compliance and quality assurance, this human oversight of machines is necessary. After all, compliance remains the most human part of the hyper-mechanised modern manufacturing process. This is particularly evident in heavily regulated industries like medical device manufacturing, aviation and defence, where errors are measured not only in costs but in lives and national security.

For cobots to become ready-to-hand, they must be genuinely collaborative: partners in the task rather than peripheral machinery. While collaboration in the context of human-robot interaction is hard to define and evolves as the field advances, it is useful to frame it in terms of the level of interaction between a human and a robot. These levels range from co-existence (shared space, individual actions) to co-operation (shared space, human-guided actions), to collaboration (shared space, joint bi-directional actions). Collaboration through this lens implies shared situational awareness, legible intent, and adaptive action: the robot exposes what it “perceives” (vision, force,…), why it is acting (constraints, goals,…), and how humans can adapt, override, or teach. Such interfaces must preserve human agency and skilled technique while reducing ergonomic and cognitive load. In practice, this means adaptive assistance that yields to expert touch, explanations of proposed actions, and workflows that keep responsibility distributed rather than displaced. When collaboration works this way, it does more than improve throughput; it establishes the preconditions for assurance to be intrinsic rather than supervisory. On this foundation, compliance becomes by design: assurance embedded in action, rather than appended after it. Cobots can inspect as they assemble, verify as they position, and generate audit-grade evidence as a by-product of normal operation. Cobots can extend human judgment through continuous monitoring, allowing human inspectors to concentrate on exceptions, interpretation, and continuous improvement.

This human-robot collaboration fundamentally hinges on trust. In production, workers must believe that a cobot will act predictably and safely; in quality assurance, they must also believe that the cobot’s monitoring and record-keeping are accurate and transparent. Research on automation psychology shows the dangers of both extremes: over-trust leads to blind reliance, while under-trust leads to redundancy and disuse. The literature points to several ways to calibrate trust, including reliable and predictable performance, timely feedback, options for human override, transparent explanations of decisions, and auditable records tied to actions. Here we emphasise the compliance-critical elements of legibility, traceability, and contestability. Trust, then, is not an abstract sentiment but a design commitment: when cobots make their intentions legible and their decisions contestable, human operators retain meaningful agency in the loop. This keeps human judgment engaged precisely where it adds the most value. In regulated settings, this turns assurance into a shared practice rather than a supervisory afterthought, and it reorients collaboration toward preserving and amplifying human skill rather than displacing it.

Concerns are often raised that automation “deskills” human labour, relegating workers to passive supervision. Cobots designed for compliance offer the opposite prospect. By taking on repetitive inspection tasks, cobots free human expertise for higher-order judgment: interpreting anomalies, adapting processes, and innovating in response to unforeseen conditions. The skill does not vanish; it is re-centred where it matters most. In this way, cobots not only maintain but actively sustain skill, ensuring that human judgment remains the decisive element in compliance.

The Compliance and Quality Assurance program at the Australian Cobotics Centre aims to develop practical tools that specify, monitor and evaluate human–robot collaboration using multi-modality sensing and AI for assessing compliance.

When cobots are truly ready-to-hand, i.e. useful, trustworthy, and engineered for compliance-by-design, they cease to be mere machines and become true collaborators that elevate human skill while making quality an intrinsic property of every human–robot action.

Further reading:

Heidegger, M. (1962). Being and time (J. Macquarrie & E. Robinson, Trans.). New York, NY: Harper & Row.

Guertler, M., Tomidei, L., Sick, N., Carmichael, M., Paul, G., Wambsganss, A., … & Hussain, S. (2023). When is a robot a cobot? Moving beyond manufacturing and arm-based cobot manipulators. Proceedings of the Design Society, 3, 3889-3898. https://doi.org/10.1017/pds.2023.390

Hancock, P. A., Billings, D. R., & Schaefer, K. E. (2011). A meta-analysis of factors affecting trust in human-robot interaction. Human Factors, 53(5), 517–527. https://doi.org/10.1177/0018720811417254

Carmichael, M. (2023). Can we Unlock the Potential of Collaborative Robots? Australian Cobotics Centre. https://www.australiancobotics.org/articles/can-we-unlock-the-potential-of-collaborative-robots/

PhD Research Spotlight: Zongyuan Zhang Tackles Contact Tasks with Mobile Robots

As part of the Biomimic Cobots program within the Australian Cobotics Centre, PhD researcher Zongyuan Zhang is leading a project that addresses a key challenge in manufacturing: enabling mobile robots to perform high-precision contact tasks, such as grinding, polishing, and welding, on large, arbitrarily placed workpieces in factory environments.

Zongyuan brings a diverse background in robotics to this work. He holds an M.Sc. in Robotics from the University of Birmingham, UK, where he focused on applying deep learning to manipulator force control. His experience spans control system design, mechanical structure design, and participation in a range of innovative robotics projects—including underwater photography robots, driverless racing cars, exoskeleton mechanical arms, dual-rotor aircraft, and remote-control robotic arms—some of which are now undergoing commercialisation.

His PhD project, Contact Task Execution by Robot with Non-Rigid Fixation, investigates how robots with non-rigidly fixed chassis can maintain the accuracy, stability, and adaptability required for industrial contact tasks. These tasks typically demand hybrid force/position control and high contact forces, which are complicated by the mobility and flexibility of the robot’s base.

This research contributes to the Biomimic Cobots program’s goal of developing collaborative robots that mimic human sensing, learning, and manipulation skills. It explores:

Zongyuan, based at QUT, is supervised by Professor Jonathan Roberts, Professor Will Browne, and Dr Chris Lehnert, and is working onsite at ARM Hub alongside industry partner, Vaulta. The project with industrial partners concerns the efficient and accurate removal of surface oxides from metallic materials, thereby enabling tighter bonding between metal components. This embedded collaboration ensures his research is conducted in real production environments and remains grounded in the practical needs of Australian manufacturers.

Recent milestones include the:

Check out our website for the latest on his project: Project 1.1 – Contact task execution by robot with non-rigid fixation

On 17th June 2025, our Centre was part of the official opening of the Robotics and Advanced Manufacturing Centre (RAMC) at TAFE Queensland Eagle Farm campus. This 5-Star Green Star-rated facility was officially launched by The Honourable Ros Bates MP, Minister for Finance, Trade, Employment and Training. The opening was also attended by Minister Tim Nicholls MP and featured a talk from ARM Hub’s Technical Lead, Dr Troy Cordie.

As part of the opening, TAFE opened their doors for an Industry Open Day. Centre Director Prof Jonathan Roberts, Centre Manager Merryn Ballantyne, and PhD researcher Jacqueline Greentree spoke with over 150 attendees about our exciting initiatives and upcoming events.

The Open Day also included stands and demonstrations from Mynt Energy Tech, ARM Hub, Aptella, Infinispark, and HFS Design (formerly Micromelon Engineering) providing a showcase of Queensland’s collaborative innovation ecosystem.

Thank you to TAFE Queensland for inviting us to be part of the event and to Benaiah Fenby and team for organising such a wonderful day. The new TAFE Centre is a fantastic step forward in preparing Queensland’s workforce for the future of work in emerging and sustainable industries. As TAFE Queensland is one of our Centre’s industry partners, we look forward to continuing to work together to ensure manufacturers are equipped for the next generation of advanced manufacturing.

📸 TAFE Queensland, Merryn Ballantyne, Jonathan Roberts

Written by Dr Alan Burden, QUT Postdoctoral Research Fellow, Designing Socio-Technical Robotics System program.

A colleague recently questioned why we are building robots that look human. If other machines already perform tasks reliably, robots in human shapes reveal more about our expectations than about technical necessity. Apart from striving to fulfil sci-fi fantasies, there seems to be little logical reason for many industries to develop humanoids.

Humanoid robots are machines designed to resemble and move like humans, typically featuring an identifiable head, torso, arms, and legs, and enabling interaction with people, objects, and environments in human-centred ways.

In 2025, manufacturers are projected to ship approximately 18,000 humanoid robots globally [1], marking a significant step toward broader adoption. Looking ahead, Goldman Sachs forecasts that by 2035, the humanoid robot market could reach USD $38 billion (approximately AUD $57 billion), with annual shipments increasing to 1.4 million units [2]. Further into the future, Bank of America projects that by 2060, up to 3 billion humanoid robots could be in use worldwide, primarily in homes and service industries [1].

From Tesla’s Optimus Gen 2 [3] to Figure AI’s Figure 02 [4], the humanoid robot is no longer a figment of science fiction. These robots will walk, lift, talk, and perform factory tasks. Yet beneath the surface of innovation lies a deeper question: Will we build humanoid robots because the human form is genuinely useful, or because it reflects our own image back at us?

In an age where industrial arm robots, wheeled and tracked platforms, and flying drones already perform industrial tasks with precision, the humanoid form can seem like an odd choice. These robots will be complex, expensive to develop, and often over-engineered for the roles they are expected to perform.

So what explains the current fascination with building robots in our own image?

Form vs Function: The Practical Debate

Our world is designed around the human body. Door handles, tools, staircases, and car pedals all presume a body with arms, legs, and binocular vision. Humanoid robots will therefore adapt more easily to our environments.

Still, there is a contradiction worth unpacking. We already have machines that operate far more efficiently without the constraints of two legs and a torso. Amazon’s warehouse bots glide on wheels, carrying shelving units with speed and precision [5]. Boston Dynamics’ Spot, a quadruped, excels at inspections and terrain navigation [6]. Agility Robotics’ Digit uses bipedal bird-like legs to move efficiently through human-centric spaces [7].

Humanoid robots won’t necessarily be more capable but may be more compatible with existing environments, especially where infrastructure redesign would be costly or disruptive. This compatibility advantage is what Stanford’s Human-Centred AI Institute describes as the affordance of embodied compatibility rather than pure efficiency [8].

The Psychological Shortcut

People respond to humanoid forms with startling immediacy. A robot with a face, a voice, and gestures doesn’t just operate in our space – it socially occupies it.

That connection brings both benefits and barriers. Humanoid robots will be easier to instruct, cooperate with, or trust, especially in care or customer service roles. This intuitive rapport, however, will come at a cost. We’ll also project emotions, intentions, and even moral status onto these mechanical beings. The IEEE’s Global Initiative on Ethics of Autonomous Systems [9] has warned that anthropomorphic design risks confusion over autonomy, trust, and accountability.

A robotic arm making a mistake will seem tolerable. A robot with eyes and facial features doing the same will feel uncanny. The “uncanny valley” – a term coined by roboticist Masahiro Mori in 1970 to describe the discomfort people feel when a robot or virtual character looks almost human, but not quite [10] – will blur the line between tool and companion, worker and being.

Redefining Labour and Power

Humanoid robots will often be pitched as general-purpose labourers: tireless, adaptable, and compliant. In some ways, they’ll echo the 19th-century industrial ideal of the perfect worker.

But this vision raises complex questions. If these machines replace humans in repetitive or hazardous roles, how will we protect the dignity and security of displaced workers? If a robot becomes a “colleague,” what responsibilities will come with that illusion?

The Future of Humanity Institute at Oxford [11] noted that humanoids could contribute to a shift in how we view authority and social dynamics. If robots are always obedient, will we begin to expect the same from people? Automation will soon shape not just job loss, but workplace culture and human behaviour. This connects with human-robot interaction research on anthropomorphic framing and robot deception, which cautions against uncritically assigning social roles to machines [11].

Who Are We Really Building?

At its core, the humanoid robot reflects our self-image. When Boston Dynamics’ Atlas robot performed parkour in a now-iconic demonstration video [12], public fascination was less about mechanics and more about the eeriness of watching something mechanical move with such human-like agility. The video, titled Atlas – Partners in Parkour, showcased robots jumping, flipping, and vaulting through a gymnastics course, which triggered admiration, unease, and a wave of social media memes drawing comparisons with the Terminator films.

This is not new. From clockwork automatons in royal courts to androids in science fiction, each era’s robots mirror its anxieties and desires. For instance, Hanson Robotics’ Sophia [13] was designed with expressive facial features to promote naturalistic interaction, yet remains polarising and is dismissed by some as a novelty. Is it an advancement in social robotics or a symbol of anthropomorphic overreach?

The goal of today’s humanoids reveals our priorities. Tesla’s Optimus will be built to handle repetitive factory work. Figure AI’s humanoids will aim to integrate into warehouse workflows. These designs won’t just be technical – they will symbolise which human qualities we value and which jobs we are ready to relinquish.

The Real Question

As mechanical humans enter our homes and workplaces, we must ask what they will symbolise beyond their specs. Humanoid robots will reflect assumptions about work, social interaction, and human worth. When we automate tasks in human form, we choose which parts of ourselves we replicate and which we outsource.

The most pressing questions won’t be about joint torque or facial recognition, but about how these machines reshape our relationships with technology, labour, and each other. Robots, like all tools, embody human intention. The challenge isn’t building minds like ours, but questioning why we keep giving them our face.

References

[1] Koetsier, J. (2025, April 30). Humanoid robot mass adoption will start in 2028, says Bank of America. Forbes. https://www.forbes.com/sites/johnkoetsier/2025/04/30/humanoid-robot-mass-adoption-will-start-in-2028-says-bank-of-america/

[2] Goldman Sachs. (2024, January 8). The global market for humanoid robots could reach $38 billion by 2035. https://www.goldmansachs.com/insights/articles/the-global-market-for-robots-could-reach-38-billion-by-2035

[3] Tesla. (2023). Tesla Optimus: Our Humanoid Robot. https://www.tesla.com/AI

[4] Figure AI. (2024). Figure 02 Robot Overview. https://www.figure.ai/

[5] Amazon. (2025). Facts & figures: Amazon fulfillment centers and robotics. https://www.aboutamazon.co.uk/news/innovation/bots-by-the-numbers-facts-and-figures-about-robotics-at-amazon

[6] Boston Dynamics. (2025). Spot | Boston Dynamics. https://bostondynamics.com/products/spot/

[7] Agility Robotics. (2025). Digit – ROBOTS: Your Guide to the World of Robotics. https://www.agilityrobotics.com/

[8] Srivastava, S., Li, C., Lingelbach, M., Martín-Martín, R., Xia, F., Vainio, K., Lian, Z., Gokmen, C., Buch, S., Liu, K., Savarese, S., Gweon, H., Wu, J., & Fei-Fei, L. (2021). BEHAVIOR: Benchmark for Everyday Household Activities in Virtual, Interactive, and Ecological Environments. arXiv preprint arXiv:2108.03332. https://arxiv.org/abs/2108.03332

[9] IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems. (2020). Ethically Aligned Design, 1st ed. https://ethicsinaction.ieee.org/

[10] Mori, M. (1970). The uncanny valley. Energy, 7(4), 33–35. (English translation by MacDorman & Kageki, 2012, IEEE Robotics & Automation Magazine). https://doi.org/10.1109/MRA.2012.2192811

[11] Brundage, M., Avin, S., Clark, J., Toner, H., Eckersley, P., Garfinkel, B., … & Amodei, D. (2018). The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation. Future of Humanity Institute, University of Oxford. Retrieved from https://arxiv.org/abs/1802.07228

[12] Boston Dynamics. (2021, August 17). Atlas | Partners in Parkour [Video]. YouTube. https://www.youtube.com/watch?v=tF4DML7FIWk

[13] Hanson Robotics. (2025). Sophia the Robot. https://www.hansonrobotics.com/sophia/

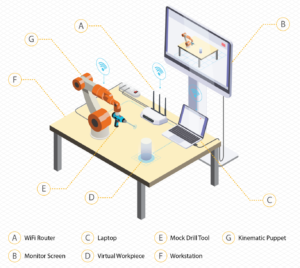

A tangible, adaptable and modular interface for embodied explorations of human-robot interaction concepts.

As robots become increasingly integrated into various industries, from healthcare to manufacturing, the need for intuitive and adaptable tools to design and test robotic movements has never been greater. Traditional approaches often rely on expensive simulations or complex hardware setups, which can restrict early-stage experimentation and limit participation from non-expert stakeholders. The kinematic puppet offers a refreshing alternative by combining hands-on prototyping with virtual simulation, making it easier for anyone to explore and refine robot motion. This work is particularly critical for exploring intuitive ways surgeons can collaborate with robots in the operating room, improving Robot-Assisted Surgery (RAS).

What is the kinematic puppet?

The kinematic puppet is an innovative tool that combines physical prototyping and virtual simulation to simplify the design and testing of robot movements and human-robot interactions. The physical component is a modular puppet constructed from 3D-printed joints equipped with rotary encoders and connected by PVC linkages. This flexible and cost-effective setup allows users to customise a robot arm to suit a variety of needs by adjusting linkage lengths and joint attachments.

On the digital side, a virtual simulation environment (developed in Unreal Engine) creates a real-time digital twin of the physical puppet. This integration via Wi-Fi/UDP enables immediate visualisation and testing of HRI concepts. By bridging the gap between physical manipulation and digital analysis, the kinematic puppet makes it easier for anyone to experiment with and refine robot motion in an interactive and accessible way.
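As a rough illustration of the data path described above (encoder readings, to joint angles, to a UDP message for the digital twin), here is a minimal Python sketch. It is not the authors' implementation: the encoder resolution, the packet layout, the host and port, and the planar-arm simplification are all assumptions made for this example.

```python
import math
import socket
import struct

# Assumed encoder resolution (counts per full turn); hypothetical value.
COUNTS_PER_REV = 4096


def counts_to_radians(counts, counts_per_rev=COUNTS_PER_REV):
    """Convert a raw encoder count into a joint angle in radians."""
    return 2.0 * math.pi * (counts % counts_per_rev) / counts_per_rev


def pack_joint_angles(angles):
    """Pack angles into a hypothetical datagram layout:
    one uint8 joint count, then that many little-endian float32 values."""
    return struct.pack(f"<B{len(angles)}f", len(angles), *angles)


def unpack_joint_angles(payload):
    """Inverse of pack_joint_angles, as the simulation side might decode it."""
    count = payload[0]
    return list(struct.unpack(f"<{count}f", payload[1:1 + 4 * count]))


def planar_end_effector(angles, link_lengths):
    """Forward kinematics for a planar serial arm (a simplification):
    each joint angle is relative to the previous link."""
    x = y = heading = 0.0
    for angle, length in zip(angles, link_lengths):
        heading += angle
        x += length * math.cos(heading)
        y += length * math.sin(heading)
    return x, y


def send_pose(angles, host="127.0.0.1", port=9000):
    """Send one pose update as a single UDP datagram (host and port assumed)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(pack_joint_angles(angles), (host, port))
```

Changing the `link_lengths` list in this sketch mirrors swapping linkage lengths on the physical puppet: the same joint angles then map to a different tool position in the simulation.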

How does the user interact with the puppet?

In the demonstration, users engage with the system by physically manipulating the kinematic puppet to control a digital twin of the robot arm, guiding it through a virtual cutting task. As they direct the arm’s movements, a virtual cutting tool simulates material removal in real time.

The system provides continuous feedback through both visual displays and haptic responses, creating an immersive and intuitive experience. This interactive environment challenges participants to balance precision and speed, highlighting the importance of both accuracy and efficiency in robotic tasks.

By making the abstract process of programming robotic movements tangible, the kinematic puppet empowers users to experiment and learn in a dynamic environment.

Demonstration at HRI 2025 – An experience for HDR students.

Presenting the Kinematic Puppet at the Human-Robot Interaction Conference 2025 provided valuable insights into how our research resonates with the broader robotics community. Attendees were particularly drawn to the system’s modularity and reconfigurability and appreciated the puppetry-based approach as an intuitive method for exploring human-robot interaction concepts.

The demonstration wasn’t without challenges. Technical issues before the demo required some mildly frantic rebuilding of the code solution the morning of, highlighting a common research reality: experimental prototypes often accumulate small bugs through iterative development that compound unexpectedly. It is an all-too-common challenge that reflects the messy nature of research, and one that isn’t always visible in polished publications.

Reviewer feedback highlighted potential applications we hadn’t considered, particularly around improving accessibility of research technologies. While most attendees engaged enthusiastically with the concept, some appeared to struggle to connect it to their work. It took time for me to find effective ways to explain the purpose and value of the approach, a good reminder that not every method resonates equally in a diverse field and that it is important to tailor explanations to your audience, even within a given research community.

For an HDR student, this experience underscores the importance of exposing your work to the research community early. The value lies not in positive reception, but in the process of presenting the work itself. Getting to explain my work to others forced me to articulate and refine my thinking, an opportunity that is missed when work is conducted in isolation. These interactions helped me understand how my work fits within the broader landscape and sparked new reflections on its purpose and potential applications that I might have missed otherwise.

You can read more about this demo here: https://dl.acm.org/doi/10.5555/3721488.3721764

Dwyer, J. L., Johansen, S. S., Donovan, J., Rittenbruch, M., & Gomez, R. (2025). What Would Jim Henson Do? Roleplaying Human-Robot Collaborations Through Puppeteering. Proceedings of the 2025 ACM/IEEE International Conference on Human-Robot Interaction, Melbourne, Australia.

TL;DR

The Australian Cobotics Centre successfully hosted its first Industry Symposium and Mini-Expo on Thursday, 5th December 2024, at the QUT Kelvin Grove campus in Brisbane. This engaging event, sponsored by ARM Hub AI Adopt Centre and the Queensland Government’s Department of Natural Resources and Mines, Manufacturing and Regional and Rural Development, brought together over 100 manufacturers, researchers, and industry professionals to explore the latest advancements in advanced manufacturing technologies and discuss the evolving role of humans in the future of manufacturing.

Professor Cori Stewart, CEO of ARM Hub, gave a keynote talk about the current state of Australian manufacturing and how AI can enhance productivity.

SME Experiences of R&D in shower base manufacturing, Rebecca Lowery, Universal Shower Base and Dr Mariadas Roshan, Swinburne University of Technology

Lessons learned from the implementation of Collaborative Robotics in surgery, Dr Tom Williamson, Stryker

Tools to utilise data for decision making, Dr Roozbeh Derakhshan, ARM Hub AI Adopt Centre

Insights from international experiences with cobots in manufacturing, Prof Rainer Mueller, ZeMA

One of the standout moments of the event was the panel discussion facilitated by Professor Jonathan Roberts, Director of the Australian Cobotics Centre. Panelists included:

This lively discussion explored the transformative impact of technologies like AI and humanoid robots on manufacturing. The panel also addressed the importance of maintaining a human-centered approach in an increasingly automated industry, sparking thought-provoking dialogue among attendees.

The mini-expo was a highlight for many participants, offering hands-on experiences with cobotic technologies. Live research demonstrations and stands from exhibitors including DCISIV Technologies, Queensland XR Hub, ARM Hub AI Adopt Centre, and the Queensland Government’s Department of Natural Resources and Mines, Manufacturing and Regional and Rural Development showcased the potential of these innovations to enhance productivity, improve workplace safety, and support the competitiveness of Australian manufacturers.

Events like this symposium play a crucial role in strengthening industry connections and disseminating research outcomes.

For those who couldn’t attend, stay tuned for future events and opportunities to engage with the Centre’s groundbreaking work. Check out our program and project pages to learn more about our ongoing projects and upcoming initiatives.

We extend our gratitude to all speakers, panelists, and attendees who made the 2024 Industry Symposium a resounding success.